A holistic approach: Intentional planning to set community data collection programs up for success

Julie Vastine, Alliance for Aquatic Resource Monitoring — Dickinson College

The impact of community-based data collection programs (for volunteers, for organizations, and ultimately for healthy environments) is significant. Effective study design and strong quality control measures set these programs up for success, and leaders in the environmental assessment space and policymakers have an important role to play in ensuring that planning and study design get the attention these programs deserve.

Embarking on a new data collection project involving volunteer scientists is a fun and exciting adventure for both participants and project leaders. However, before volunteer scientists put on their waders and grab a water sample, project leaders, in collaboration with a stakeholder committee, need to take a step back, review goals, and develop a comprehensive plan. Thinking through crucial questions before a project starts can help project leaders set their program up for success. Too often, these steps receive too little forethought, which ultimately dampens the impact of monitoring.

Best practices for working with volunteer scientists vary, but most programs address these steps before recruiting participants:

- Identify a question or the need for data collection.

- Create a study design.

- Develop a volunteer engagement strategy (from recruitment to retirement).

- Develop program evaluation tools.

- Assemble a quality assurance/quality control plan.

- Assess resources and determine what is needed to launch a program.

Policymakers and leaders in the environmental assessment space can advance the goals of these volunteer scientists by training them on the guidelines for effective study design. Let’s take a closer look at study design and the role of quality assurance/quality control (QA/QC) in data collection programs.

A study design is the framework in which the key decisions about data collection are made. It is a living document, meant to be revisited throughout the lifetime of a project to ensure that the goals are still relevant. Study design is also meant to be a participatory process. At its heart is the community interested in data collection, which typically assembles key stakeholders and invites a technical support provider or service provider to facilitate the process. The study design team then answers a series of questions that define the scope of the initiative. Including representative voices from each step in the process (e.g., goal setters, data collectors, and data users) will also improve the study design and the data collection program.

Study design is also a fantastic way to set the tone for a research project. For example, to keep the community in the driver’s seat, facilitators and/or technical support providers should take a back seat on questions such as “How does data collection fit in with your community vision?”, “Why are you collecting data?”, or “How will you use the data?” It is common for the community partner to look to the facilitator for answers, so it is important to emphasize that this is their project and that they need to define its scope.

The two most critical steps in the study design process are prioritizing the question that monitoring data will ideally answer and determining the intended use of the data. Decisions about data use determine the parameters to collect and the required methodology. They also serve as a reality check that the quality of the data collected aligns with its intended use. For example, if data are being used for legal purposes, it may be best to work with a certified lab. If a project seeks to screen for pollution hotspots and/or generate baseline data, more accessible and affordable techniques can work well when paired with the right data credibility measures.

It is important to have a technical support provider at the table when the question and data use goals are defined, to help inform choices about parameters and methodology. Not all cost-effective equipment is created equal, so decisions should be informed by experience with the equipment and with field testing. This technical voice will also be helpful as community partners work through the necessary quality assurance/quality control measures that document that data are of known quality!

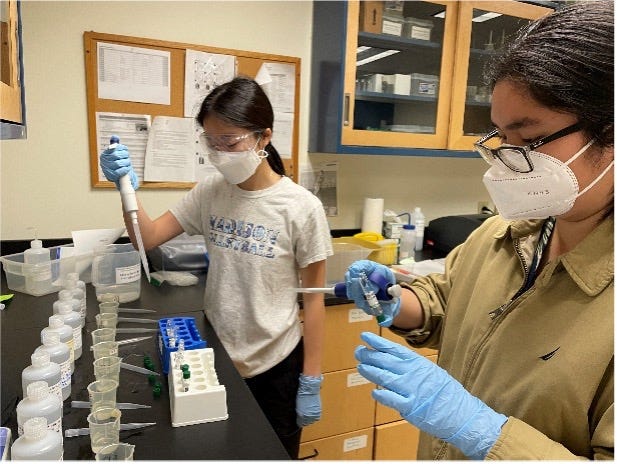

When people question the validity of volunteer-collected data, it is an opportunity to highlight the diverse measures programs take to ensure that volunteers are using their equipment correctly and collecting credible data. Consider a common baseline indicator for water quality: nitrate-nitrogen. When developing quality control measures, we first look to Standard Methods or the National Environmental Methods Index (NEMI). These resources guide sample storage, holding times, testing thresholds, equipment maintenance, and more, all of which shape our “internal quality control” measures. Replicates are another excellent internal tool; we set the acceptable relative precision range for replicates based on manufacturer recommendations.

In addition to internal measures, we conduct a “duplicate sample analysis” with our volunteers: they collect two samples in the field, test one with their own equipment, and send the other to us. We test that second sample using both volunteer equipment and benchtop methodology to determine whether volunteers are using their equipment correctly. This is where we catch problems such as expired reagents or samples that were not allowed to warm to the right temperature before analysis.

The Environmental Protection Agency’s Quality Assurance Project Plan guidance document for participatory science projects is helpful in making these decisions for any grassroots research program. EPA and USGS have done great work in creating resources like NEMI and the Quality Assurance Project Plan guidance.
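The holding-time, replicate, and duplicate checks described above each come down to a simple comparison. The Python sketch below is a minimal illustration under stated assumptions, not this program’s actual procedure. It uses the relative percent difference (RPD), one common way to express agreement between two measurements. The function names and the acceptance limits (48 hours, 20% RPD for replicates, 25% RPD for duplicates) are hypothetical placeholders; a real program would take its limits from manufacturer recommendations, Standard Methods, NEMI, or its Quality Assurance Project Plan.

```python
from datetime import datetime, timedelta

# NOTE: every default limit below is a hypothetical placeholder, not a value
# taken from Standard Methods, NEMI, or any manufacturer.


def within_holding_time(collected: datetime, analyzed: datetime,
                        max_hours: float = 48.0) -> bool:
    """Internal QC: was the sample analyzed within the allowed holding time?"""
    elapsed = analyzed - collected
    return timedelta(0) <= elapsed <= timedelta(hours=max_hours)


def relative_percent_difference(a: float, b: float) -> float:
    """Return |a - b| as a percentage of the mean of a and b."""
    mean = (a + b) / 2
    if mean == 0:
        # Two zero readings agree perfectly; avoid dividing by zero.
        return 0.0
    return abs(a - b) / mean * 100


def replicates_pass(rep1: float, rep2: float, max_rpd: float = 20.0) -> bool:
    """Internal QC: do two replicate readings agree within max_rpd percent?"""
    return relative_percent_difference(rep1, rep2) <= max_rpd


def duplicate_pass(volunteer_result: float, lab_result: float,
                   max_rpd: float = 25.0) -> bool:
    """Duplicate sample analysis: does the volunteer's result match the lab's?"""
    return relative_percent_difference(volunteer_result, lab_result) <= max_rpd


if __name__ == "__main__":
    # Illustrative nitrate-N readings in mg/L (made-up numbers, not real data).
    print(within_holding_time(datetime(2024, 5, 1, 9), datetime(2024, 5, 2, 9)))  # True
    print(replicates_pass(2.0, 2.2))  # RPD is about 9.5%, so True
    print(duplicate_pass(1.0, 2.0))   # RPD is about 66.7%, so False
```

A failed duplicate check like the last one does not say *why* the results disagree; it flags the volunteer’s kit and technique for follow-up, which is where issues such as expired reagents or cold samples would surface.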

Federal policymakers and leaders in the environmental assessment space should invest more resources in communicating the importance of planning and study design. In this field, we have the unique privilege of collaborating with communities and individuals who want to make a difference through data collection and data use. Investing the right amount of time and resources in planning before a project launches honors the time and effort of the individuals contributing to these programs. More importantly, it helps ensure that their time and effort translate into cleaner air, better water, and safer communities.